Embedding security by design into your architecture is more than just a best practice. It is how anyone should start their work on any project in a public, private, or hybrid cloud. Veeam Backup for Google Cloud (VBG) is one of the technologies that enables data security and resiliency by backing up and protecting your data running in the cloud. However, VBG itself resides in the same cloud, so one of the first things to do is make sure it is deployed and accessed in a secure manner.

The challenge arises from the need to access the VBG console for configuration and operation activities. This post focuses on securing that access.

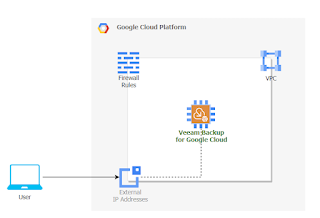

In a standard deployment, you install your VBG appliance in a VPC, apply firewall rules to restrict access to it, and then connect to the console from a web browser over an SSL-encrypted session. This connectivity can go over the Internet or, in more complex scenarios, over VPN or interconnect links. If you connect to VBG over the Internet, you need to expose VBG on a public IP address and restrict access to that address to your source IP. This is the use case we cover in this article. Another scenario, using bastion servers and private connectivity, is not covered here, although the principles and mechanisms described still apply.

As you can easily see, exposing VBG directly to the Internet has some disadvantages. A firewall rule that limits the source IP addresses allowed to connect to the external IP address of VBG improves security, but it does not apply zero trust principles. We don't know who is hiding behind that allowed source IP address. No user identification or authorization takes place before the user is allowed to open a session to the VBG console. Anyone connecting from that specific source IP address is automatically trusted.

How can we make sure that whoever or whatever is trying to connect to VBG is actually allowed to do so? Keep in mind that we are talking about the connection to the VBG console, before any authentication and authorization inside VBG is applied. We want to make sure that whoever tries to enter credentials in the VBG console is identified and has permission to do so.

Think of use cases where a user has lost the right to manage backups but still has access to the backup infrastructure. You want a secure and simple way of controlling that access and an easy way to revoke it. In this situation we can use Cloud Identity and Access Management (IAM) and Cloud Identity-Aware Proxy (IAP).

How does it work?

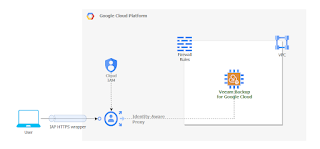

Cloud IAP implements TCP forwarding, which encrypts any type of TCP traffic between the client initiating the session and IAP using HTTPS. In our case we normally connect to the VBG console using HTTPS (web browser). With IAP TCP forwarding, the initial HTTPS traffic is wrapped in another HTTPS connection. From IAP to VBG, the traffic is sent without the additional layer of encryption. The purpose of using IAP is to keep VBG connected to private networks only and to control which users can actually connect by using IAM principals and permissions.

The public IP of VBG is removed. If outbound connectivity is needed, use a NAT gateway to enable it; that is out of scope for the current post.
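As a rough sketch, removing the external IP could look like the function below. The instance name, zone, and access config name are placeholders, not values from this setup; the access config name in particular is an assumption (it is often `External NAT` or `external-nat`), so check it with `gcloud compute instances describe` first.

```shell
# Placeholder values; replace with your own.
VBG_INSTANCE="your-vbg-instance-name"
VBG_ZONE="your-instance-zone"
# Assumption: name of the access config that holds the external IP.
# Verify with: gcloud compute instances describe "$VBG_INSTANCE" --zone="$VBG_ZONE"
ACCESS_CONFIG_NAME="External NAT"

# Detach the external IP from the VBG instance.
remove_vbg_public_ip() {
  gcloud compute instances delete-access-config "$VBG_INSTANCE" \
    --zone="$VBG_ZONE" \
    --access-config-name="$ACCESS_CONFIG_NAME"
}
```

Call `remove_vbg_public_ip` only after you have confirmed that the IAP tunnel works, otherwise you may lock yourself out of the console.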

To summarize, instead of allowing anyone behind an IP address to connect to our VBG portal, we restrict this connectivity to specific IAM users. Additionally, we keep VBG on a private network.

Guide

Start by preparing the project: enable the Cloud Identity-Aware Proxy API. In the console:

- go to APIs & Services > Enable APIs and Services

- search for Cloud Identity-Aware Proxy API and press Enable.

Once enabled, you will see it displayed in the list of APIs.
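If you prefer the command line, the same step can be sketched with gcloud. The project ID is a placeholder, and the function names are only illustrative wrappers.

```shell
# Placeholder project ID; replace with your own.
PROJECT_ID="your-project-id"

# Enable the Cloud Identity-Aware Proxy API in the project.
enable_iap_api() {
  gcloud services enable iap.googleapis.com --project="$PROJECT_ID"
}

# List enabled services and keep only the IAP entry, if present.
iap_api_enabled() {
  gcloud services list --enabled --project="$PROJECT_ID" | grep iap.googleapis.com
}
```

Run `enable_iap_api`, then `iap_api_enabled`; the second call prints a line only when the API shows up among the enabled services.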

Allow IAP to connect to your VM by creating a firewall rule. In the console, go to VPC network > Firewall and press Create Firewall Rule:

- name: allow-ingress-from-iap

- targets: Specified target tags, then select the tag of your VBG instance. We are using the "vbg-europe" network tag. If you don't use network tags, you can select "All instances in the network"

- source IPv4 ranges: Add the range 35.235.240.0/20 which contains all IP addresses that IAP uses for TCP forwarding.

- protocols and ports - specify the port you want to access - TCP 443

- press Save
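The firewall rule above can also be expressed as a gcloud command. This is a sketch using the rule name, tag, port, and IAP range from the list; the network name is a placeholder.

```shell
# Placeholder: the VPC network where the VBG appliance runs.
VPC_NETWORK="your-vpc-network"

# Allow ingress on TCP 443 from the IAP TCP forwarding range
# to instances that carry the vbg-europe network tag.
create_iap_firewall_rule() {
  gcloud compute firewall-rules create allow-ingress-from-iap \
    --network="$VPC_NETWORK" \
    --direction=INGRESS \
    --action=ALLOW \
    --rules=tcp:443 \
    --source-ranges=35.235.240.0/20 \
    --target-tags=vbg-europe
}
```

If you don't use network tags, dropping `--target-tags` makes the rule apply to all instances in the network, which matches the console option mentioned above.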

Grant users (or groups) permission to use IAP TCP forwarding, and scope that permission to the specific instance to make it as restrictive as possible. To do so, grant the roles/iap.tunnelResourceAccessor role on the VBG instance: open the IAP admin page in the console (Security > Identity-Aware Proxy) and go to the SSH and TCP Resources page (you may ignore the OAuth warning).

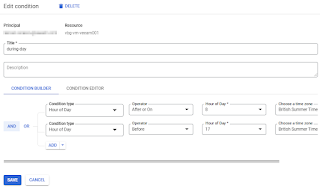

Select your VBG instance and press Add principal. Give the IAM principal the IAP-Secured Tunnel User role. You may want to restrict access to VBG to specific periods of time or days of the week. In this case, add a time-based IAM condition to the role binding.
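A time-based condition is written as a CEL expression. The helper below is a minimal sketch that prints such an expression for Monday to Friday within a range of hours; the function name, time zone, and hours are illustrative assumptions, not values from this setup.

```shell
# Print a CEL expression that allows access Monday (1) to Friday (5),
# from start_hour (inclusive) to end_hour (exclusive), in the given time zone.
# In IAM conditions, getDayOfWeek returns 0 for Sunday through 6 for Saturday.
business_hours_condition() {
  tz="$1"
  start_hour="$2"
  end_hour="$3"
  printf 'request.time.getDayOfWeek("%s") >= 1 && request.time.getDayOfWeek("%s") <= 5 && request.time.getHours("%s") >= %s && request.time.getHours("%s") < %s\n' \
    "$tz" "$tz" "$tz" "$start_hour" "$tz" "$end_hour"
}

# Example values only: weekdays, 09:00 to 17:59, Bucharest time.
business_hours_condition "Europe/Bucharest" 9 18
```

The printed expression can be pasted into the condition editor when adding the principal.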

Save the configuration, and you are ready to connect to your isolated VBG instance. The machine from which you initiate the connection needs to have the gcloud CLI (Cloud SDK) installed. Run the following command to open a TCP forwarding tunnel to the VBG instance on port 443.

gcloud compute start-iap-tunnel your-vbg-instance-name 443 --local-host-port=localhost:0 --zone=your-instance-zone

When the tunnel is established, a message in the terminal shows the local TCP port used for forwarding.

To execute gcloud compute start-iap-tunnel, you need the compute.instances.get and compute.instances.list permissions on the project where the VBG instance runs. You can grant these permissions to users or groups through a custom role.
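One possible way to set up such a custom role with gcloud is sketched below. The project ID, role ID, and title are hypothetical; only the two permissions come from the text above.

```shell
# Placeholder and hypothetical values; replace with your own.
PROJECT_ID="your-project-id"
ROLE_ID="vbgIapTunnelLookup"

# Create a custom role holding only the two instance lookup permissions.
create_tunnel_lookup_role() {
  gcloud iam roles create "$ROLE_ID" \
    --project="$PROJECT_ID" \
    --title="VBG IAP tunnel instance lookup" \
    --permissions=compute.instances.get,compute.instances.list \
    --stage=GA
}

# Bind the custom role to a member, for example user:someone@example.com
# or group:backup-admins@example.com.
grant_tunnel_lookup_role() {
  gcloud projects add-iam-policy-binding "$PROJECT_ID" \
    --member="$1" \
    --role="projects/$PROJECT_ID/roles/$ROLE_ID"
}
```

Run `create_tunnel_lookup_role` once, then `grant_tunnel_lookup_role "group:backup-admins@example.com"` for each user or group that should be able to start the tunnel.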

If the user is not authorized in IAP, or an IAM condition attached to the role is not met, the attempt to start the tunnel fails with an authorization error.

Finally, it's time to open your browser, point it to localhost and the TCP port returned by the gcloud command, and connect to your VBG instance in the cloud.
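Before opening the browser, you can check from the terminal that the tunnel forwards HTTPS traffic. The sketch below assumes curl is installed; the function name is illustrative. Certificate verification is skipped because the appliance certificate will not match the name localhost.

```shell
# Print the HTTP status code returned through the local tunnel port.
# Argument: the local port reported by gcloud compute start-iap-tunnel.
check_vbg_console() {
  port="$1"
  curl --insecure --silent --output /dev/null \
    --write-out '%{http_code}\n' "https://localhost:$port/"
}
```

For example, `check_vbg_console 8443` queries a tunnel listening on local port 8443; any HTTP status code in the output means the request reached a web server through the tunnel.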

The proposed solution is suitable for management and operations of VBG. However, please keep in mind that IAP TCP forwarding is not intended for bulk data transfer. Also, IAP automatically disconnects sessions after one hour of inactivity.

In this post we've seen how to use Cloud IAP and Cloud IAM to enable secure access to the Veeam Backup for Google Cloud console using zero trust architecture principles.